Mar. 02, 2026


Last Updated March 2026

Every major leap in AI, from the first neural networks to today’s large language models, was designed, built, and directed by humans. Machines learn patterns from data. Humans determine which patterns matter, what goals are worth pursuing, and what lines should never be crossed. As AI grows more capable, the human role in shaping it doesn’t shrink. It becomes more consequential than ever.

“The day science begins to study non-physical phenomena, it will make more progress in one decade than in all the previous centuries of its existence.” (Nikola Tesla)

We are living through what historians will likely call the most significant technological transition since the Industrial Revolution. The shift from rule-based computing — where humans coded every instruction explicitly — to machine learning systems that derive their own rules from data represents a fundamentally new relationship between humans and machines.

But this transition was not inevitable, nor is it autonomous. Every breakthrough in AI — deep learning, transformer architectures, reinforcement learning from human feedback — emerged from decades of sustained human intellectual effort. Researchers at universities and labs around the world posed the right questions, ran the failed experiments, and persisted through the winters when AI funding dried up and interest collapsed.

Traditional software followed deterministic logic: given input A, produce output B, every time, without exception. Machine learning inverted this model. Instead of programming rules, engineers now design systems that infer rules from examples — and humans provide those examples, label them, evaluate the outputs, and correct the errors. The machine learns; the human teaches.

This shift has implications far beyond technical architecture. It means that the values embedded in AI systems — what counts as a correct answer, what outcomes the system optimizes for, whose interests it serves — are human choices, made explicitly or implicitly at every stage of development. A hiring algorithm trained on historical data doesn’t just learn who was hired in the past. It learns to replicate the biases of whoever made those decisions. Human oversight is not optional. It is the only mechanism by which these systems can be corrected.

$15.7T: estimated AI contribution to global GDP by 2030 (PwC)

The narrative that AI will simply replace human workers misses how AI actually functions. Current AI systems are extraordinary pattern-matching engines — but pattern matching is only one component of intelligent work. The roles that remain stubbornly human are not relics of a pre-AI era. They are the roles that become more important as AI scales.

The most powerful AI systems are not the ones that maximize benchmark performance in isolation — they are the ones designed from the start to align with human needs, values, and limitations. Human-centric design is not a soft constraint added to AI development. It is the engineering discipline that determines whether a capable system is also a useful and safe one.

77% of workers say AI makes them more productive, not less (McKinsey, 2024)

Values like fairness, transparency, and privacy do not emerge automatically from AI training. They must be deliberately engineered — through the selection of training data, the design of reward functions, the choice of evaluation metrics, and the architecture of oversight mechanisms. An AI system that optimizes for engagement without a fairness constraint will learn to exploit psychological vulnerabilities. One that maximizes accuracy without a privacy constraint will happily memorize and reproduce personal data.

At Coderio, when our ML & AI Studio builds intelligent systems for clients, the ethical framework is established in the discovery phase — not the testing phase. Retrofitting values into a deployed AI system is dramatically harder and far more expensive than building them in from the start.

Automation is not a binary switch. The question is not “automate or don’t automate” — it is “which decisions should the human make, and which should the system make, and how should they interact at the boundary?” Getting this balance wrong in either direction is costly. Over-automating high-stakes decisions removes accountability and produces catastrophic edge-case failures. Under-automating routine tasks wastes human cognitive capacity on work that machines can do more consistently.

The human-in-the-loop principle: For decisions that are irreversible, high-stakes, or difficult to audit, the best-practice architecture keeps a human in the decision loop — not merely as a rubber stamp, but with sufficient context and authority to meaningfully override the system. This is not a limitation of current AI. It is a deliberate design choice that reflects what we know about how these systems fail.
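The routing rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Decision` fields, the `route` function, and the `CONFIDENCE_FLOOR` value are all hypothetical names and numbers chosen for the example, and the confidence check is an added assumption that goes beyond the three criteria named in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """A model recommendation plus the properties that determine who decides."""
    action: str
    model_confidence: float  # 0.0 to 1.0, as reported by the model
    irreversible: bool
    high_stakes: bool
    auditable: bool


# Hypothetical threshold (an assumption); a real system would calibrate it per use case.
CONFIDENCE_FLOOR = 0.90


def route(decision: Decision) -> str:
    """Return "human_review" or "auto_approve" for a proposed decision."""
    # Irreversible, high-stakes, or hard-to-audit decisions always go to a person,
    # no matter how confident the model is.
    if decision.irreversible or decision.high_stakes or not decision.auditable:
        return "human_review"
    # Assumed extra safeguard: low-confidence routine decisions are escalated too.
    if decision.model_confidence < CONFIDENCE_FLOOR:
        return "human_review"
    return "auto_approve"


print(route(Decision("refund_small_order", 0.97, False, False, True)))  # auto_approve
print(route(Decision("deny_loan", 0.99, True, True, True)))             # human_review
```

Note that the second call is escalated even at 99% confidence. That ordering is the point of the principle: the nature of the decision, not the model's certainty, determines whether a human decides.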

Individual ethical choices by individual developers are insufficient when AI systems operate at scale. Organizations need formal frameworks: documented principles, bias audits, model cards that disclose training data and known limitations, red-teaming processes that actively search for failure modes, and governance structures that give accountability teeth. The EU AI Act, the NIST AI Risk Management Framework, and emerging industry standards are beginning to codify these requirements — but organizations that wait for regulation are already behind.
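To make one item from that list concrete, here is a minimal sketch of a single bias-audit check: comparing selection rates across groups. The function names and the data are made up for illustration, and the 0.8 threshold is the "four-fifths" rule of thumb from US employment practice, used here as an assumption rather than a universal standard. A real audit would cover more metrics and far more data.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of positive outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected` is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so there is no disparity to measure
    return min(rates.values()) / highest


# Made-up outcomes: group A selected 6 of 10 times, group B selected 3 of 10 times.
records = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(records)
ratio = disparate_impact_ratio(rates)
print(rates)        # {'A': 0.6, 'B': 0.3}
print(ratio)        # 0.5
print(ratio < 0.8)  # True: below the assumed four-fifths threshold, so flag for review
```

A failing ratio does not prove the system is unfair. It is a signal that a person with context needs to look at why the rates differ.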

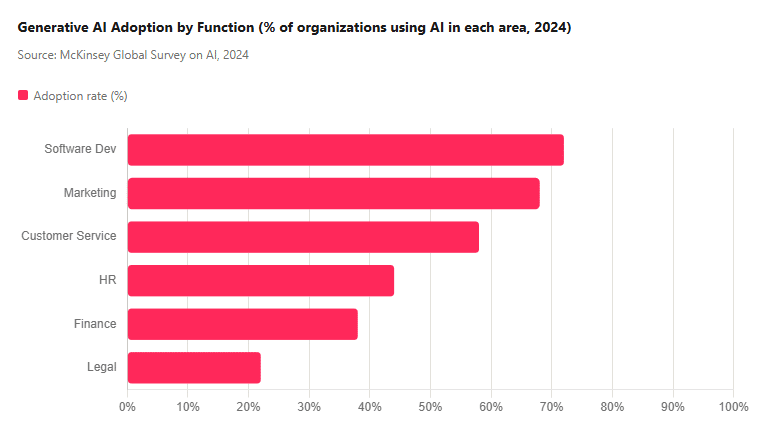

No development in AI has captured public imagination — or disrupted professional practice — as rapidly as generative AI. Tools capable of producing text, images, code, audio, and video from natural language prompts have placed creative capability in the hands of anyone with an internet connection. The question is not whether this changes creative work. It does, profoundly. The question is how.

Generative AI collapses the distance between intention and output. A designer who previously spent hours on layout iterations can now explore dozens of concepts in minutes. A developer can scaffold entire application features from a description. A marketer can draft, test, and refine copy at a pace previously impossible.

But the creative judgment — what to make, for whom, why, and whether it achieves its purpose — remains irreducibly human. AI does not experience the problem being solved. It does not understand the emotional resonance of a design choice for a specific audience. It does not carry the professional accountability for whether the work succeeds. Humans bring all of these things to the collaboration. The creative loop is: human intention → AI execution → human evaluation → refined intention. Remove the human from that loop and the output lacks direction.

Our research on generative AI in retail and generative AI in finance consistently shows that the highest-value applications are those where AI handles volume and variation while humans handle judgment and accountability.

Effective use of generative AI is itself a skill. Prompt engineering — the art of communicating precisely with AI systems to produce useful outputs — is now a legitimate professional capability. Understanding the failure modes of AI generation (hallucination, bias amplification, copyright risk) is essential for anyone using these tools professionally. And the meta-skill of knowing when not to use AI — when human judgment, emotional intelligence, or contextual nuance matters more than speed — may be the most important of all.

The AI era does not eliminate the need for deep technical expertise; it changes which technical skills are most valuable and who needs them. The democratization of AI tooling means that non-developers can now accomplish tasks that previously required engineering resources. But it also means that the engineers who understand how these systems work at a deeper level and can build, customize, audit, and fix them are more valuable, not less.

| Skill Category | Key Capabilities | Type | Demand Trajectory |
|---|---|---|---|
| ML Engineering | Model training, fine-tuning, evaluation, deployment pipelines | Technical | ↑ High growth |
| Data Engineering | Pipeline design, data quality, feature engineering, governance | Technical | ↑ High growth |
| Prompt Engineering | Effective communication with LLMs; RAG, chain-of-thought, evaluation | Hybrid | ↑↑ Fastest growth |
| AI Ethics & Governance | Bias auditing, risk assessment, policy development, compliance | Human | ↑↑ Fastest growth |
| Critical Thinking | Evaluating AI outputs, identifying failure modes, making judgment calls | Human | ↑ Increasing premium |
| Domain Expertise | Healthcare, legal, finance, engineering: contextual knowledge AI lacks | Human | → Stable; differentiator |
| Emotional Intelligence | Empathy, leadership, conflict resolution, stakeholder management | Human | |

For those building AI systems, Python remains the dominant language of the ML ecosystem through libraries like PyTorch, TensorFlow, Hugging Face Transformers, and LangChain. For production deployments and data-intensive pipelines, languages like Go and Java remain important for performance-critical components. Cloud-native architectures using containers and serverless functions are increasingly the default deployment target for AI services.

The AI landscape evolves faster than any individual can track. The practical implication is not that everyone must become an ML engineer. It is that every knowledge worker needs a learning practice: a deliberate, regular investment of time in understanding what AI can and cannot do, which tools are maturing and which are still experimental, and how the technology is changing the specific domain they work in. Organizations that build learning cultures will compound this advantage. Those that treat AI literacy as a one-time training event will fall behind.

The organizations that will extract the most value from AI are not the ones that deploy it fastest. They are the ones that deploy it most thoughtfully — with clear frameworks for where AI augments human work, where it takes over routine tasks, and where human judgment must remain primary.

Not every process benefits equally from AI. The highest-ROI AI applications share a common profile: high-volume, pattern-driven tasks with measurable outcomes and abundant training data. Customer support triage, document processing, code review assistance, and predictive maintenance are examples where AI augmentation consistently delivers. High-stakes, low-volume decisions — executive strategy, crisis management, novel ethical dilemmas — are poor candidates for automation, regardless of how capable the underlying models become.

Our work on digital transformation consistently shows that the most expensive AI mistakes come from applying automation to the wrong problems, not from imperfect models applied to the right ones.

Effective human-AI collaboration requires explicit design. What decisions does the AI make autonomously? Which does it recommend for human review? What information does the human need to make a meaningful override? How does disagreement between human and AI judgment get resolved and logged? Organizations that leave these questions implicit discover their answers only after something goes wrong.
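One of those questions, how disagreement gets resolved and logged, lends itself to a small sketch. The record layout and the `record_review` function below are hypothetical, and the rule that an override must carry a written rationale is an assumption added for the example. The output is a JSON line that could be appended to whatever audit store an organization already uses.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """One human review of one AI recommendation (hypothetical schema)."""
    case_id: str
    ai_recommendation: str
    human_decision: str
    reviewer: str
    rationale: str
    timestamp: str


def record_review(case_id, ai_recommendation, human_decision, reviewer, rationale=""):
    """Return a JSON log line; overrides without a rationale are rejected."""
    is_override = human_decision != ai_recommendation
    if is_override and not rationale:
        raise ValueError("an override must include a rationale")
    record = ReviewRecord(
        case_id=case_id,
        ai_recommendation=ai_recommendation,
        human_decision=human_decision,
        reviewer=reviewer,
        rationale=rationale,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    entry = asdict(record)
    entry["override"] = is_override  # makes disagreements easy to filter later
    return json.dumps(entry)


line = record_review(
    "case-1", "approve", "reject", "reviewer-7", "income document unreadable"
)
print(json.loads(line)["override"])  # True
```

Logging agreements as well as overrides matters: the override rate over time is one of the few direct measures of whether reviewers are exercising judgment or rubber-stamping.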

The technical challenges of AI integration are generally solved faster than the human ones. Resistance to AI adoption is rarely irrational — it often reflects legitimate concerns about job security, loss of skill-based identity, or justifiable skepticism about AI reliability in specific contexts. Organizations that dismiss these concerns as obstacles to progress tend to undermine the very trust that effective human-AI collaboration depends on.

Change management reality check: A 2024 Gartner survey found that 56% of AI initiative failures are attributed to people and process challenges — not technology limitations. The most sophisticated AI deployment still fails if the humans expected to work alongside it don’t trust it, don’t understand it, or weren’t involved in designing how it fits their work.

The most important question about AI and employment is not “how many jobs will AI eliminate?” It is “what kind of work do we want humans to do, and how do we design AI systems that create more of that work, not less?”

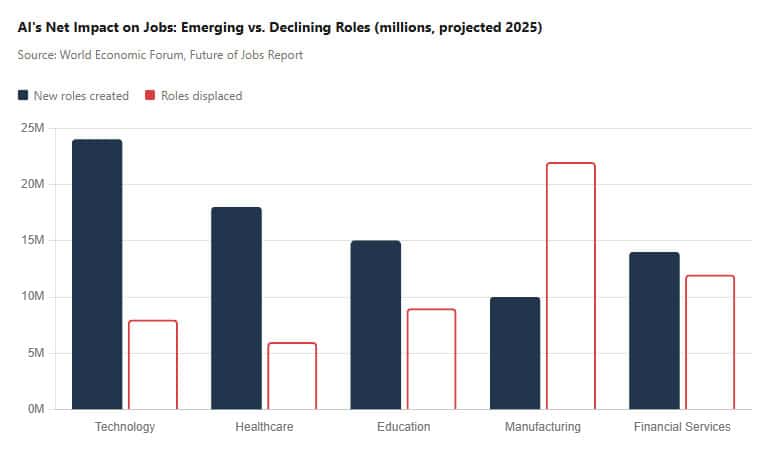

The World Economic Forum’s Future of Jobs Report 2020 projected that automation would displace 85 million jobs by 2025 while creating 97 million new roles. The new roles tend to be higher-skilled, more cognitively engaging, and better compensated than those displaced. The challenge is the transition: the displaced and the created are not always the same people, locations, or skill profiles.

Historical analysis of previous automation waves — from agricultural mechanization to industrial robotics to software automation — consistently shows the same pattern: routine, explicitly definable tasks get automated; tasks requiring contextual judgment, emotional intelligence, and creative adaptation command increasing premiums. AI is following this pattern, but operating across a much wider range of task types, including some cognitive tasks previously assumed to be automation-proof.

The implication for individuals and organizations is that investing in deeply human capabilities — critical thinking, creativity, empathy, ethical judgment, cross-disciplinary synthesis — is not a romantic response to technological change. It is the rational economic strategy for the AI era.

The competitive advantage from AI will not come from access to models — those are increasingly commoditized. It will come from the quality of the human judgment applied to AI outputs, the richness of the proprietary data that those models can be trained on, and the organizational culture that enables humans and AI to collaborate effectively. Coderio’s approach to nearshore software development is built on exactly this insight: pairing world-class engineering talent with deep domain knowledge, enabling clients to build AI-powered products that reflect genuine human expertise, not just model capability.

Humans play multiple irreplaceable roles: designing and training models, curating training data, setting ethical guidelines, interpreting outputs, and making high-stakes decisions requiring contextual judgment. AI amplifies human capability but depends on human oversight to function safely and effectively.

AI will automate many routine tasks, but wholesale replacement of human workers is unlikely. The World Economic Forum’s Future of Jobs Report 2020 projected that automation would displace 85 million jobs by 2025 while creating 97 million new ones. The roles that grow will require uniquely human skills (creativity, critical thinking, empathy, and ethical judgment) that AI currently cannot replicate.

The most valuable human skills in the AI era combine technical fluency (Python, machine learning fundamentals, prompt engineering) with deeply human capabilities (critical thinking, emotional intelligence, creative problem-solving, ethical reasoning). The ability to collaborate effectively with AI tools — knowing when to trust them and when to override them — is itself an emerging core competency.

Human oversight prevents AI systems from perpetuating bias, making ethically unsound decisions, or optimizing for the wrong objectives. AI models learn patterns from historical data, which reflects existing human biases and limitations. Without human review and correction, AI systems can amplify inequalities and produce harmful outcomes faster and at a larger scale than any individual human error could.

Start by identifying high-volume, pattern-driven processes where AI augmentation delivers clear value, and explicitly define where human judgment must remain primary. Build collaboration frameworks that design the human-AI boundary intentionally rather than leaving it to default. Invest in change management as seriously as in the technology itself: most AI initiative failures are people- and process-related, not technology-related.


As Cofounder and Executive Chairman of Coderio, Joaquin is the driving force behind the company’s organizational culture and principles. He provides strategic leadership and direction while focusing on the continuous improvement of Coderio’s services. Joaquin holds a bachelor’s degree in information technology, studies in business administration, and is a thought leader in the software outsourcing industry. He has a wealth of experience in creating innovative technological products and is a profoundly passionate leader and a natural motivator, always offering endless support to create opportunities for talented people to thrive.
