Feb. 09, 2026

15-minute read


Building production-grade AI used to require a specialized data science team, months of infrastructure setup, and significant capital expenditure before a single prediction was made. AI as a Service changed that equation. Today, a mid-sized company can embed natural language processing, computer vision, or predictive analytics into its products within days, paying only for what it uses.

But AIaaS is not a one-size-fits-all answer. Vendor lock-in, data privacy obligations, and the ceiling on customization are real constraints that can sink an AIaaS project if not anticipated. This guide covers everything: what AIaaS is, how the market is growing, what it genuinely delivers, where it falls short, and how to evaluate whether it fits your organization.

$57B+ Global AIaaS market projected by 2028, up from $15B in 2023 (CAGR ~31%)
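The growth figure can be checked directly. Over the five years from 2023 to 2028, growing from $15B to $57B implies a compound annual growth rate of:

$$
\text{CAGR} = \left(\frac{57}{15}\right)^{1/5} - 1 = 3.8^{0.2} - 1 \approx 0.306
$$

That is about 31% per year, consistent with the figure quoted. Because the 2028 value is stated as a floor ($57B+), the implied rate is a lower bound.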

AI as a Service (AIaaS) is a cloud delivery model in which third-party providers — primarily AWS, Microsoft Azure, and Google Cloud — make AI capabilities accessible via APIs, pre-trained models, and managed ML platforms. Businesses consume these capabilities on a subscription or usage basis without owning, operating, or maintaining the underlying infrastructure or training pipelines.

Definition: AIaaS = AI capabilities (NLP, computer vision, prediction, generation) delivered over the cloud as API-accessible services, billed on usage. The provider handles infrastructure, model training, scaling, and updates.

The analogy to cloud computing more broadly is useful: just as IaaS eliminated the need to own physical servers, AIaaS eliminates the need to build and maintain AI models. Businesses interact with a well-documented API endpoint and receive a structured prediction, classification, or generated output in return.

This is distinct from hiring machine learning engineers to build custom models, though the two approaches can be combined: a business might use AIaaS for commodity AI tasks (sentiment analysis, translation, OCR) while investing in custom models only where competitive differentiation demands it.

63% of companies cite cost savings as their primary reason for choosing AIaaS over in-house AI development.

At a technical level, AIaaS follows a consistent pattern regardless of the provider or capability being accessed.
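In broad strokes, the pattern is: authenticate, send the input to an endpoint, and receive a structured output. A minimal sketch of that cycle using only Python's standard library follows. The endpoint URL, the JSON field names, and bearer-token authentication are hypothetical placeholders, since every provider defines its own URL, auth scheme, and schema.

```python
import json
import urllib.request
from dataclasses import dataclass

# Hypothetical endpoint: real providers each define their own URL, auth and schema.
ENDPOINT = "https://api.example-ai-provider.com/v1/sentiment"


@dataclass
class Prediction:
    label: str
    score: float


def build_request(text: str, api_key: str) -> urllib.request.Request:
    """Authenticate and package the input as a JSON POST request."""
    body = json.dumps({"text": text, "language": "en"}).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_response(raw: bytes) -> Prediction:
    """Turn the provider's JSON reply into a typed result."""
    payload = json.loads(raw)
    return Prediction(label=payload["label"], score=float(payload["score"]))


def classify(text: str, api_key: str, timeout: float = 10.0) -> Prediction:
    """Full round trip; needs network access and a real endpoint."""
    with urllib.request.urlopen(build_request(text, api_key), timeout=timeout) as resp:
        return parse_response(resp.read())
```

Separating request building from response parsing keeps the provider-specific details in two small functions, which also makes them easy to test without calling the real service.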

For more advanced use cases, some AIaaS platforms also offer fine-tuning workflows, where you provide your own labeled data to adapt a base model to your specific domain, without building from scratch. This sits between pure off-the-shelf AIaaS and fully custom data science and ML development.
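Fine-tuning usually starts with converting labeled examples into the provider's training-file format. The sketch below writes one JSON object per line in a chat-style layout (system, user, and assistant messages), similar to the format OpenAI documents for fine-tuning chat models. Other providers expect different schemas, so treat the field layout as an assumption to verify. The system prompt and the ticket labels are hypothetical.

```python
import json
from typing import Iterable

# Hypothetical instruction for a ticket-classification task.
SYSTEM_PROMPT = "Classify the support ticket into one of: billing, bug, how-to."


def to_chat_jsonl(examples: Iterable[tuple[str, str]]) -> str:
    """Convert (input_text, label) pairs into JSON Lines training data.

    Each line holds a system instruction, the user input, and the desired
    assistant answer (the label). Check your provider's docs before uploading.
    """
    lines = []
    for text, label in examples:
        record = {
            "messages": [
                {"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": text},
                {"role": "assistant", "content": label},
            ]
        }
        lines.append(json.dumps(record, ensure_ascii=False))
    return "".join(line + "\n" for line in lines)
```

The resulting string can be written to a `.jsonl` file and uploaded through the provider's fine-tuning workflow.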

6x Faster time to first AI deployment when using AIaaS versus building a custom model from scratch.

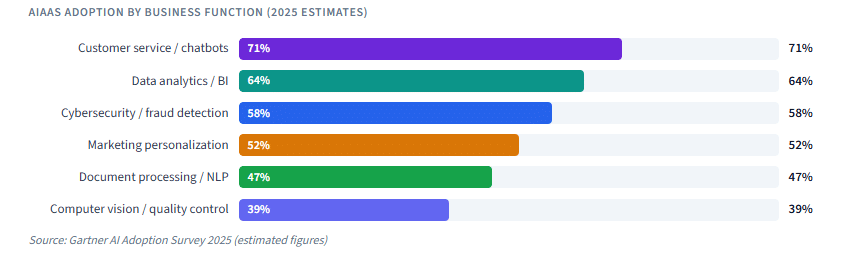

AIaaS covers a broad range of capabilities. The six categories below account for the vast majority of enterprise usage.

77% of enterprises are actively using or piloting AIaaS in at least one business function

Training a production-grade machine learning model requires large labeled datasets, ML engineering expertise, GPU infrastructure, and months of iteration. AIaaS removes all of that. A development team with no prior ML experience can integrate a sentiment analysis or image recognition API in an afternoon. For companies exploring AI for the first time, this dramatically lowers the cost and risk of the first experiment.

Rather than committing to GPU clusters or ML platform licenses upfront, AIaaS billing is tied directly to consumption. A company processing 10,000 API calls per month pays for 10,000 calls — not for idle capacity. This maps AI costs directly to business activity and makes ROI calculation straightforward.
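A quick break-even calculation makes the trade-off concrete. The prices below are hypothetical placeholders, not any provider's actual rates; the point is the shape of the comparison between usage billing and a fixed monthly commitment.

```python
def monthly_cost_pay_per_use(calls: int, price_per_1k_calls: float) -> float:
    """Usage-based billing: cost scales linearly with the number of calls."""
    return calls / 1000 * price_per_1k_calls


def break_even_calls(fixed_monthly_cost: float, price_per_1k_calls: float) -> float:
    """Monthly call volume at which usage billing equals a fixed-capacity bill."""
    return fixed_monthly_cost / price_per_1k_calls * 1000


# Hypothetical prices, for illustration only.
PRICE_PER_1K = 1.50        # USD per 1,000 API calls
FIXED_CAPACITY = 3000.00   # USD per month for a dedicated deployment

print(monthly_cost_pay_per_use(10_000, PRICE_PER_1K))  # 15.0
print(break_even_calls(FIXED_CAPACITY, PRICE_PER_1K))  # 2000000.0
```

With these assumed numbers, 10,000 calls cost $15 per month under usage billing, and usage billing only overtakes the fixed option at around 2 million calls per month.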

The frontier models powering AIaaS platforms (GPT-4o, Gemini 1.5, Claude 3.5) represent billions of dollars of research investment and petabytes of training data. Outside a handful of hyperscalers and frontier AI labs, no company could realistically replicate them independently. AIaaS gives every business access to the same capabilities, acting as a significant equalizer between large enterprises and smaller competitors.

When a provider improves its model, API consumers can benefit without retraining anything themselves. There are no retraining cycles or deployment windows to manage, and model maintenance is the provider’s responsibility. This is a material operational advantage over maintaining custom models, although provider updates should still be validated against your own use case, as discussed below.

AIaaS platforms are built to handle millions of concurrent requests. Whether a company makes 1,000 or 10 million API calls per day, the provider scales the underlying infrastructure transparently, within the account’s rate limits and quotas. This is particularly valuable for businesses with unpredictable or seasonal demand spikes.

Because the model is already trained and the API is documented, development time shrinks from months to days. This speed advantage is critical in competitive markets where being first to deploy an AI-powered feature can determine market positioning. Teams at Coderio working on digital transformation projects consistently find that AIaaS integrations cut AI feature delivery timelines by 60 to 80 percent compared to custom model development.

The advantages are real, but AIaaS introduces a distinct set of risks that every organization should evaluate before committing to a provider.

AIaaS integrations become embedded in product code. Switching providers requires rewriting API calls, adapting to different input/output schemas, retraining any fine-tuned components, and revalidating outputs. The deeper the integration, the higher the switching cost. Mitigating this requires abstracting AI calls behind an internal interface layer from the start, so the underlying provider can be swapped without touching application logic.
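One way to build that interface layer, sketched in Python with hypothetical vendors: the application depends only on a neutral `SentimentResult` type and a `SentimentProvider` protocol, while small adapters translate each vendor's response schema. The vendor response shapes and the polarity-to-label thresholds are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass(frozen=True)
class SentimentResult:
    """Provider-neutral result type that application code depends on."""
    label: str   # "positive" | "negative" | "neutral"
    score: float


class SentimentProvider(Protocol):
    def analyze(self, text: str) -> SentimentResult: ...


class VendorAAdapter:
    """Hypothetical vendor returning {"Sentiment": "POSITIVE", "Confidence": 0.9}."""

    def __init__(self, call_api: Callable[[str], dict]):
        self._call_api = call_api  # injected transport function

    def analyze(self, text: str) -> SentimentResult:
        raw = self._call_api(text)
        return SentimentResult(raw["Sentiment"].lower(), float(raw["Confidence"]))


class VendorBAdapter:
    """Hypothetical vendor returning {"polarity": 0.8} on a -1..1 scale."""

    def __init__(self, call_api: Callable[[str], dict]):
        self._call_api = call_api

    def analyze(self, text: str) -> SentimentResult:
        polarity = float(self._call_api(text)["polarity"])
        # Crude mapping onto the neutral schema, illustrative only.
        if polarity > 0.2:
            label = "positive"
        elif polarity < -0.2:
            label = "negative"
        else:
            label = "neutral"
        return SentimentResult(label, abs(polarity))


def summarize_review(provider: SentimentProvider, review: str) -> str:
    """Application logic: unaware of which vendor sits behind `provider`."""
    result = provider.analyze(review)
    return f"{result.label} ({result.score:.2f})"
```

Switching vendors then means writing a new adapter and changing which one is constructed, while `summarize_review` and the rest of the application stay untouched.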

Pre-trained models are optimized for general use cases. A healthcare company processing highly specialized clinical notes, or a legal firm analyzing jurisdiction-specific contract language, will quickly hit the ceiling of what a generic NLP model can do accurately. Fine-tuning helps, but for problems where domain accuracy is critical, custom model development may be the only viable path.

Sending data to a third-party API means that data leaves your environment. For industries governed by GDPR, HIPAA, CCPA, or financial regulations, this requires careful contractual and technical controls. Many providers offer data processing agreements, private deployment options, and regional data residency guarantees — but these must be verified before any sensitive data is processed. See the dedicated security section below for a full treatment of this.

AIaaS pricing is attractive at low volumes and during pilots. At production scale, especially with high-frequency or token-intensive use cases like LLM completions, costs can grow significantly faster than anticipated. Implementing usage monitoring, budget alerts, and token optimization strategies from day one is essential.

Cost Warning

A common trap: a pilot consuming 50,000 tokens per day costs pennies. The same application at 50 million tokens per day costs thousands of dollars monthly. Always model costs at 100x your pilot volume before committing to an architecture.
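Running that projection takes only a few lines. The blended price of $5 per million tokens is a hypothetical figure; substitute your provider's current input and output rates and your own token mix.

```python
def monthly_token_cost(tokens_per_day: int, usd_per_million_tokens: float,
                       days: int = 30) -> float:
    """Projected monthly spend for a given daily token volume."""
    return tokens_per_day * days / 1_000_000 * usd_per_million_tokens


PILOT_TOKENS_PER_DAY = 50_000
BLENDED_PRICE = 5.00  # hypothetical USD per 1M tokens (input and output averaged)

for multiplier in (1, 100, 1_000):
    cost = monthly_token_cost(PILOT_TOKENS_PER_DAY * multiplier, BLENDED_PRICE)
    print(f"{multiplier:>5}x pilot volume: ${cost:,.2f}/month")
```

At this assumed price, the pilot costs about $7.50 per month, 100x the pilot volume about $750, and the 50-million-token-per-day scenario about $7,500 per month.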

Standard AIaaS tiers share compute resources across many customers, so latency can increase during peak demand periods. For latency-sensitive production applications, dedicated or reserved throughput options (such as Amazon Bedrock Provisioned Throughput, Azure OpenAI Provisioned Throughput Units, and OpenAI’s reserved-capacity tiers) are available, but at a significant premium.
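One hedged way to decide whether that premium is justified is to compare tail latency on the standard tier with your application's latency target. The sketch assumes you already log per-request latencies in milliseconds; using the 95th percentile and the SLO value are illustrative choices, not provider guidance.

```python
import statistics


def p95_ms(latencies_ms: list[float]) -> float:
    """95th-percentile latency from observed request timings."""
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99.
    return statistics.quantiles(latencies_ms, n=100)[94]


def needs_dedicated_tier(latencies_ms: list[float], slo_ms: float) -> bool:
    """Flag when tail latency on the shared tier exceeds the application's SLO."""
    return p95_ms(latencies_ms) > slo_ms
```

Measuring over a window that includes peak hours matters here, since averages taken off-peak can hide exactly the slowdowns that shared tiers introduce.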

The provider landscape is dominated by the three hyperscalers plus a growing tier of specialized AI API companies. The right choice depends on your existing cloud footprint, the AI capabilities you need, your data governance requirements, and your team’s expertise.

| Provider | Core AI Strengths | Best For | Data Residency Options | Fine-tuning Available |
|---|---|---|---|---|
| AWS (Amazon) | Broadest service catalog: Rekognition, Comprehend, Forecast, Bedrock (LLMs), SageMaker | Enterprises already on AWS; full ML lifecycle | Yes (multiple regions) | Yes (SageMaker, Bedrock) |
| Microsoft Azure | Azure AI Services, OpenAI Service, Cognitive APIs, Document Intelligence | Microsoft-stack organizations; regulated industries | Yes (data boundary controls) | Yes (Azure OpenAI) |
| Google Cloud | Vertex AI, Vision AI, Natural Language API, Gemini API, AutoML | Data-heavy workloads; multimodal AI use cases | Yes (Data Residency add-on) | Yes (Vertex AI) |
| OpenAI API | GPT-4o, o1/o3, DALL-E, Whisper, Embeddings | Generative text, code, image; developer-first integrations | Limited (Enterprise option) | Yes (GPT fine-tuning) |
| Anthropic (Claude API) | Claude 3.5/4 family; long context; document analysis | High-accuracy text tasks; safety-critical applications | Via AWS Bedrock | Limited (coming) |
| Hugging Face | Open-source model hub; Inference Endpoints; Spaces | Teams wanting model portability; avoiding proprietary lock-in | Yes (dedicated endpoints) | Yes (full control) |

Selection Tip

If your organization already has significant infrastructure on one hyperscaler, start there. The IAM integration, VPC connectivity, and billing consolidation alone justify preferring native AI services before evaluating specialized providers.

AIaaS delivers measurable value across a wide range of industries. The examples below represent deployments where the speed and cost advantages of AIaaS are most pronounced.

For many organizations, data governance is the deciding factor in AIaaS adoption. Understanding exactly what happens to your data when it passes through a third-party AI API is not optional — it is a compliance requirement.

| Question | Why It Matters | What to Look For |
|---|---|---|
| Is my data used to train future models? | Data sent to APIs may be used to improve the provider’s models; an opt-out is critical for proprietary data | Explicit opt-out in the DPA or Enterprise agreement |
| Where is data processed and stored? | GDPR restricts transfers of personal data outside the EEA unless safeguards such as adequacy decisions or Standard Contractual Clauses apply; HIPAA requires a Business Associate Agreement (BAA) before a provider handles PHI | Regional endpoint options; data residency guarantees |
| Who can access my data at the provider? | Provider staff access policies affect compliance certifications | Zero-employee-access guarantees or audit log access |
| What certifications does the provider hold? | SOC 2 Type II and ISO 27001 are baseline expectations; PCI DSS compliance and HIPAA BAA availability matter for payment and health data | Up-to-date certifications listed in the provider’s Trust Center |
| What happens to data in transit and at rest? | Encryption standards determine exposure risk if infrastructure is compromised | TLS 1.2+ in transit; AES-256 at rest at minimum |

Beyond contractual controls, engineering teams can reduce data exposure through several architectural choices: sending only the minimum data required to complete the AI task (data minimization); anonymizing or pseudonymizing inputs before they reach external APIs; and, when regulatory requirements demand it, using private or on-premises deployment options such as Azure AI on Azure Stack or AWS Outposts, dedicated Hugging Face Inference Endpoints, or self-hosted open-source models.
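Pseudonymization can happen in a thin step right before the external call. The sketch below replaces email addresses and phone numbers with placeholder tokens, keeps the token-to-value mapping inside your environment, and restores the values in the response afterwards. The regular expressions are deliberately simple assumptions and will miss many kinds of personal data (names, addresses, account numbers).

```python
import re

# Simplistic patterns for illustration; production PII detection needs far more coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def pseudonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace emails and phone numbers with stable placeholder tokens.

    Returns the redacted text plus a token -> original mapping that never
    leaves your environment.
    """
    mapping: dict[str, str] = {}  # token -> original value
    seen: dict[str, str] = {}     # original value -> token (same value, same token)

    def substitute(kind: str):
        def repl(match: re.Match) -> str:
            value = match.group(0)
            if value not in seen:
                token = f"<{kind}_{len(seen) + 1}>"
                seen[value] = token
                mapping[token] = value
            return seen[value]
        return repl

    text = EMAIL_RE.sub(substitute("EMAIL"), text)
    text = PHONE_RE.sub(substitute("PHONE"), text)
    return text, mapping


def reidentify(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in text returned by the external API."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

Only the redacted text is sent to the provider; `reidentify` runs locally on the response.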

For organizations requiring the highest level of control, Coderio’s Machine Learning and AI Studio can design hybrid architectures that combine AIaaS for non-sensitive workloads with on-premises or private cloud inference for regulated data.

Choose a single use case where success is clearly measurable: a support ticket classification system, a product description generator, or a document summarizer. Run for 60 to 90 days, measure accuracy and business impact, then decide whether to expand. Avoid rolling out AIaaS broadly across multiple workflows simultaneously before validating quality.

Never call an AIaaS API directly from every part of your codebase. Create an internal AI service layer that other systems call. This layer handles authentication, error handling, retry logic, caching, and — critically — allows you to swap providers or route to fallback models without touching application code. This is the single most important architectural decision for long-term AIaaS flexibility.
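A minimal sketch of such a layer follows, assuming each provider is wrapped as a plain function that takes a prompt and returns text (the `Provider` type, the class name, and the retry parameters are hypothetical). It tries the primary provider with exponential backoff, falls back to the next provider in order, and caches results for identical inputs.

```python
import time
from typing import Callable

Provider = Callable[[str], str]  # hypothetical: prompt in, model output out


class AIServiceLayer:
    """Single internal entry point for AI calls: retries, fallback and caching.

    Application code calls `complete()`; which vendors sit behind it is configuration.
    """

    def __init__(self, providers: list[tuple[str, Provider]],
                 max_retries: int = 2, backoff_s: float = 0.5):
        self.providers = providers        # ordered: primary first, then fallbacks
        self.max_retries = max_retries
        self.backoff_s = backoff_s
        self._cache: dict[str, str] = {}  # unbounded; production would use TTL/LRU

    def complete(self, prompt: str) -> str:
        if prompt in self._cache:         # avoid paying twice for identical input
            return self._cache[prompt]
        errors = []
        for name, call in self.providers:
            for attempt in range(self.max_retries + 1):
                try:
                    result = call(prompt)
                    self._cache[prompt] = result
                    return result
                except Exception as exc:  # narrow to transient SDK errors in real code
                    errors.append(f"{name} attempt {attempt + 1}: {exc}")
                    if attempt < self.max_retries:
                        time.sleep(self.backoff_s * 2 ** attempt)  # exponential backoff
        raise RuntimeError("All AI providers failed: " + "; ".join(errors))
```

Caching identical prompts suits deterministic tasks such as classification; for generative features that rely on sampling, cache only where a repeated answer is acceptable.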

Set up budget alerts and usage dashboards from day one. Track the cost per unit of business value (e.g., cost per resolved ticket, cost per document processed) rather than raw API costs alone. Implement token budgets for LLM-based features and log all high-cost invocations for optimization review.
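The two metrics in that paragraph, a hard token budget and cost per unit of business value, can be tracked together. This sketch assumes token counts are read from each API response and that a resolved ticket is the business unit; the class, the logging threshold, and the price are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class UsageTracker:
    """Tracks LLM spend against a daily token budget and business outcomes."""
    daily_token_budget: int
    usd_per_million_tokens: float  # hypothetical; use your provider's actual rates
    tokens_used: int = 0
    resolved_tickets: int = 0
    expensive_calls: list[tuple[str, int]] = field(default_factory=list)
    expensive_threshold: int = 4_000  # calls above this many tokens get logged for review

    def record_call(self, call_id: str, tokens: int) -> None:
        # Enforce the budget before accepting the call into the totals.
        if self.tokens_used + tokens > self.daily_token_budget:
            raise RuntimeError(f"Token budget exceeded by call {call_id}")
        self.tokens_used += tokens
        if tokens > self.expensive_threshold:
            self.expensive_calls.append((call_id, tokens))

    def record_resolution(self) -> None:
        self.resolved_tickets += 1

    @property
    def spend_usd(self) -> float:
        return self.tokens_used / 1_000_000 * self.usd_per_million_tokens

    @property
    def cost_per_resolved_ticket(self) -> float:
        if not self.resolved_tickets:
            return float("inf")
        return self.spend_usd / self.resolved_tickets
```

Reporting `cost_per_resolved_ticket` alongside raw spend shows whether rising API costs are buying proportionally more business value.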

AIaaS models are probabilistic — they produce good outputs most of the time, not all of the time. Establish a ground truth evaluation dataset for your specific use case, run new model versions against it, and set minimum accuracy thresholds before deploying updates. Never assume a model version update from the provider has maintained or improved your use-case-specific performance.
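A deployment gate for model updates can be as simple as scoring the current and candidate model versions on the same ground-truth set. In this sketch each model version is wrapped as a hypothetical `Classifier` function, and the 0.90 floor is an illustrative threshold to replace with one suited to your use case.

```python
from typing import Callable

Classifier = Callable[[str], str]  # hypothetical wrapper around one model version


def accuracy(model: Classifier, ground_truth: list[tuple[str, str]]) -> float:
    """Share of ground-truth examples the model labels correctly."""
    if not ground_truth:
        raise ValueError("ground truth set is empty")
    correct = sum(1 for text, expected in ground_truth if model(text) == expected)
    return correct / len(ground_truth)


def approve_model_update(candidate: Classifier, current: Classifier,
                         ground_truth: list[tuple[str, str]],
                         min_accuracy: float = 0.90) -> bool:
    """Promote a new version only if it clears the floor and does not regress."""
    cand = accuracy(candidate, ground_truth)
    curr = accuracy(current, ground_truth)
    return cand >= min_accuracy and cand >= curr
```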

Map every AIaaS integration in your data processing register. Record what data is sent, which provider receives it, under which legal basis, and how long it is retained. Under GDPR, this documentation feeds your records of processing activities and any required data protection impact assessments (DPIAs), and enterprise customers frequently request it during vendor due diligence.
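A register entry can be kept as structured data so that gaps are easy to spot before an audit. The sketch below is one hypothetical shape for such a record; the field names are illustrative, not a regulatory template.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AIProcessingRecord:
    """One entry in a data processing register for an AIaaS integration."""
    integration: str                 # internal feature name
    provider: str                    # third party that receives the data
    data_categories: list[str]       # what data is sent
    legal_basis: str                 # e.g. "contract", "legitimate interests", "consent"
    retention_days: Optional[int]    # provider retention; None means unknown
    dpa_signed: bool = False


def missing_information(record: AIProcessingRecord) -> list[str]:
    """List empty or unknown fields so gaps surface before a DPIA or audit."""
    gaps = [f.name for f in fields(record)
            if getattr(record, f.name) in ("", [], None)]
    if not record.dpa_signed:
        gaps.append("dpa_signed")
    return gaps
```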

Governance Tip

Treat AIaaS providers the same as any other third-party data processor: perform annual reviews of their security certifications, monitor their incident disclosure history, and ensure your Data Processing Agreement is current before processing any regulated data.

The choice between AIaaS and custom model development is not binary. Most mature AI strategies use both. The table below maps specific signals to a recommended approach.

| Signal | Recommended Approach | Reasoning |
|---|---|---|
| Commodity AI task (translation, OCR, sentiment) | AIaaS | Pre-trained models perform well; no differentiation from custom training |
| No in-house ML expertise | AIaaS | Removes the primary barrier; expertise not required to consume an API |
| Proof of concept or MVP phase | AIaaS | Speed and low cost allow rapid validation before committing to infrastructure |
| Highly specialized domain (clinical, legal, industrial) | Fine-tuning or custom | Generic models often lack domain accuracy; fine-tuning on proprietary data improves performance |
| AI is a core competitive differentiator | Custom with AIaaS components | Competitors access the same AIaaS models; differentiation requires proprietary training |
| Strict data sovereignty requirements | Private deployment or on-prem | Standard AIaaS tiers may not satisfy regulatory data residency rules |
| Very high, sustained inference volume | Evaluate TCO carefully | At scale, running open-source models on owned infrastructure can undercut AIaaS pricing |
| Mature AI team and labeled proprietary dataset | Custom model development | Proprietary data and expertise are competitive assets; AIaaS would commoditize the advantage |

For most organizations early in their AI journey, AIaaS is the right starting point. It delivers real value quickly, proves ROI before significant investment, and builds organizational understanding of what AI can and cannot do. The path to custom model development, where warranted, runs through AIaaS experience, not around it.

If you are evaluating how AIaaS fits into a broader digital transformation strategy, or want to understand how to integrate AI capabilities into your existing cloud architecture, the frameworks above provide a solid starting point for that conversation.

Coderio’s AI and ML Studio helps companies design, integrate, and govern AI-as-a-Service implementations across AWS, Azure, and Google Cloud, from initial provider selection to production deployment and ongoing optimization.

Leandro is a Subject Matter Expert in Backend at Coderio, where he focuses on modern backend architectures, AI-assisted modernization, and scalable enterprise systems. He contributes technical thought leadership on topics such as legacy system transformation and sustainable software evolution, helping organizations improve performance, maintainability, and long-term scalability.

